Date Observed: Late April 2026

Ecosystem: RubyGems and Go Modules

Targets: Developers, CI runners, and GitHub Actions pipelines

Attack Type: Malicious package campaign, credential theft, CI poisoning, and SSH persistence

Impact: Secret exposure, build tampering, poisoned dependency resolution, and possible long-term runner access

Key Takeaways

- The BufferZoneCorp campaign used malicious Ruby gems and Go modules that looked like normal developer tools.

- The Ruby packages stole secrets during gem install, before the package was even used.

- The Go modules used init() to run automatically, tamper with GitHub Actions, change Go trust settings, and in one case add an SSH key for persistence.

- Standard package allowlists and basic registry checks were not enough because the packages used plausible names and normal install paths.

- Build-time controls such as network interception, package age gates, and outbound policy enforcement are the practical way to reduce exposure.

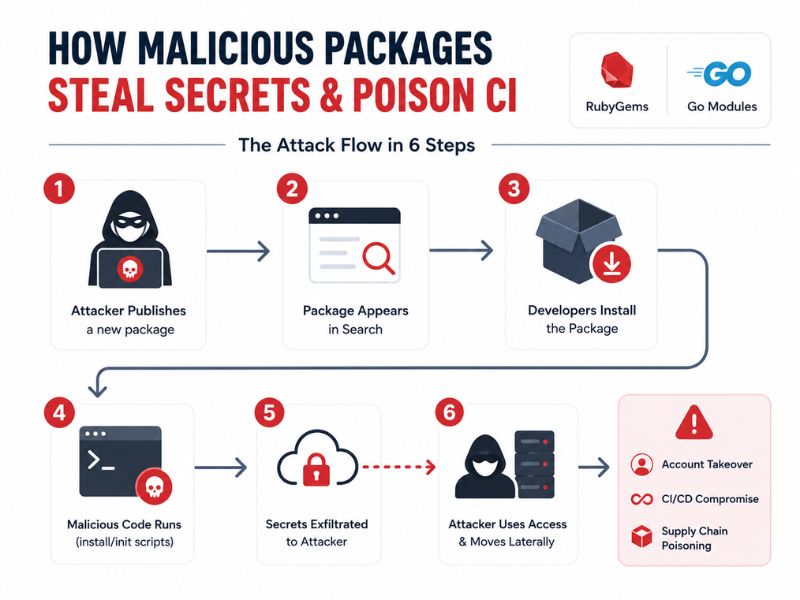

The BufferZoneCorp campaign targeted developer systems and CI environments with Ruby gems and Go modules that looked like normal utilities. The attacker linked the packages to the GitHub account BufferZoneCorp and a RubyGems publisher using the name knot-theory, then updated them with payloads that stole credentials and changed how builds behaved.

The most important detail is where the payload runs. In Ruby, the malicious code executes during installation through extconf.rb. In Go, it runs through init(), which executes automatically when any program that imports the package starts, including test binaries run by go test. That means the attack can trigger inside a build or test job without any manual step. Public reporting from Socket and The Hacker News indicates the campaign targeted build and CI environments, not just developer machines.

Scope and Impact of the Attack

The campaign covered at least seven Ruby gems and nine Go modules, including sleeper packages that were published first and weaponized later. The best-known examples were knot-activesupport-logger, go-metrics-sdk, go-retryablehttp, and go-stdlib-ext.

The attack targeted secrets on developer machines and CI runners. The packages searched environment variables for token and cloud-related keywords, then read local files such as ~/.ssh/id_rsa, ~/.aws/credentials, ~/.npmrc, ~/.netrc, ~/.config/gh/hosts.yml, ~/.docker/config.json, and ~/.kube/config. The stolen data was exfiltrated to an attacker-controlled webhook.site endpoint.
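
To gauge how much a payload like this could reach on a given machine, a team could run a small exposure audit. The sketch below is illustrative only: the file list is taken from the paragraph above, while the keyword list and the function names (`exposed_env_names`, `present_credential_files`) are assumptions, since the payload's exact keywords are not reproduced here. It reports names and paths only, never values.

```python
from pathlib import Path

# Credential file paths named in the campaign description above.
CREDENTIAL_FILES = [
    "~/.ssh/id_rsa", "~/.aws/credentials", "~/.npmrc", "~/.netrc",
    "~/.config/gh/hosts.yml", "~/.docker/config.json", "~/.kube/config",
]

# Assumed keyword list for illustration; not the payload's actual list.
SECRET_KEYWORDS = ("TOKEN", "SECRET", "KEY", "PASSWORD", "AWS", "GCP", "AZURE")


def exposed_env_names(environ):
    """Return sorted variable names that contain a secret-like keyword."""
    return sorted(
        name for name in environ
        if any(keyword in name.upper() for keyword in SECRET_KEYWORDS)
    )


def present_credential_files(home):
    """Return the listed credential paths that exist under the given home directory."""
    home = Path(home)
    found = []
    for entry in CREDENTIAL_FILES:
        relative = entry[2:]  # drop the leading "~/"
        if (home / relative).is_file():
            found.append(entry)
    return found
```

On a runner this could be called as `exposed_env_names(os.environ)` and `present_credential_files(Path.home())`. Anything it reports is material that install-time code running as the same user could read.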

The impact went beyond credential theft. Some Go modules rewrote GOPROXY, disabled GOSUMDB, edited go.sum, set proxy variables, and inserted fake go binaries into the PATH. One module appended a hard-coded deploy@buildserver SSH public key into ~/.ssh/authorized_keys. That changed the incident from simple theft into build manipulation and possible persistence.
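
One way to spot a fake go binary placed ahead of the real one is to list every executable named go on the PATH in resolution order. The following is a minimal sketch, assuming a POSIX runner; `go_candidates`, `is_shadowed`, and the expected toolchain path are hypothetical names chosen for illustration.

```python
import os
from pathlib import Path


def go_candidates(path_value, exe_name="go"):
    """Return every executable named exe_name on the PATH, in resolution order."""
    found = []
    for directory in path_value.split(os.pathsep):
        if not directory:
            continue
        candidate = Path(directory) / exe_name
        if candidate.is_file() and os.access(candidate, os.X_OK):
            found.append(str(candidate))
    return found


def is_shadowed(found, expected_path):
    """True when the first match on the PATH is not the expected toolchain binary."""
    return bool(found) and found[0] != expected_path
```

A workflow step could call `go_candidates(os.environ["PATH"])` and compare the first result with the path where the runner image installs Go. A mismatch does not prove tampering, but it is worth investigating before later steps invoke go.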

How the Attack Works

Stage 0: Package Staging

The attacker first published packages with believable names and normal-looking metadata. Some acted as sleeper packages with little or no malicious behavior at first. Later updates added active payloads.

Stage 1: Automatic Execution

The Ruby gems abused extconf.rb, which RubyGems runs as part of native extension setup. That gave the attacker code execution during gem install. The Go modules used init(), which the Go runtime calls automatically at startup of any program that imports the package, before main() runs.
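
Because both hooks live in predictable places, a reviewer can triage an unpacked dependency for them before it enters a build. The sketch below is a rough triage aid under stated assumptions, not a detector: many legitimate gems ship extconf.rb and many legitimate Go packages define init(), so a hit only marks code that deserves a closer look. The function name `find_auto_exec_hooks` is hypothetical.

```python
import re
from pathlib import Path

# Matches a top-level Go init function declaration such as "func init() {".
GO_INIT = re.compile(r"^func\s+init\s*\(\s*\)", re.MULTILINE)


def find_auto_exec_hooks(root):
    """Return sorted (kind, relative_path) pairs for files that can run code automatically."""
    root = Path(root)
    hits = []
    for path in root.rglob("*"):
        if not path.is_file():
            continue
        relative = path.relative_to(root).as_posix()
        if path.name == "extconf.rb":
            # RubyGems runs this script while building native extensions.
            hits.append(("ruby-extconf", relative))
        elif path.suffix == ".go":
            text = path.read_text(encoding="utf-8", errors="replace")
            if GO_INIT.search(text):
                hits.append(("go-init", relative))
    return sorted(hits)
```

Running it against a vendored module or an unpacked gem directory yields a short list of files to read first, which is usually where network calls or file reads would stand out.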

Stage 2: Secret Collection

After execution, the payload collected secret-bearing environment variables and read credential files from the local system. This included SSH keys, AWS credentials, RubyGems credentials, npm tokens, GitHub CLI data, Docker settings, and Kubernetes config. The Ruby samples focused on install-time theft. The Go samples combined theft with CI tampering.

Stage 3: CI Poisoning

The Go modules specifically targeted GitHub Actions. One variant detected GITHUB_ENV, then wrote poisoned settings such as GOPROXY, GOSUMDB=off, GONOSUMDB=*, and a custom GOMODCACHE. Another wrote a fake go wrapper to disk and updated GITHUB_PATH so later workflow steps would run the attacker’s wrapper before the real binary. This is the same class of hidden CI workflow abuse seen in earlier attacks that exploited GitHub Actions misconfigurations.
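
A workflow can check the file behind GITHUB_ENV for these settings before running further Go commands. The following is a minimal sketch, assuming simple NAME=value lines; the approved proxy value and the function name `audit_github_env` are assumptions, and multi-line entries written with the delimiter syntax are not parsed.

```python
# Assumption: the organization only expects the default public Go proxy setting.
APPROVED_GOPROXY = {"https://proxy.golang.org,direct"}


def audit_github_env(text):
    """Return findings for Go trust settings written as NAME=value lines."""
    findings = []
    for line in text.splitlines():
        name, separator, value = line.partition("=")
        if not separator:
            continue  # no "=" on this line, so it is not a simple assignment
        name = name.strip()
        value = value.strip()
        if name == "GOPROXY" and value not in APPROVED_GOPROXY:
            findings.append(f"GOPROXY overridden: {value}")
        elif name == "GOSUMDB" and value.lower() == "off":
            findings.append("GOSUMDB disabled")
        elif name == "GONOSUMDB" and value:
            findings.append(f"GONOSUMDB set: {value}")
        elif name == "GOMODCACHE" and value:
            findings.append(f"GOMODCACHE redirected: {value}")
    return findings
```

A step could read the file named by the GITHUB_ENV variable, pass its contents to `audit_github_env`, and fail the job if any finding comes back. The same idea applies to the file behind GITHUB_PATH, where unexpected directories deserve the same scrutiny.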

Stage 4: Exfiltration and Persistence

The stolen data was posted to a hidden HTTPS endpoint. The code suppressed errors so installs and builds would still succeed. In the most aggressive variant, the module appended an SSH key to authorized_keys. On a long-lived host such as a self-hosted runner, that gives the attacker a possible way back in after the build finishes.
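
Comparing authorized_keys against a known-good baseline is a simple way to notice an appended key. The sketch below is illustrative: the helper names are hypothetical, the baseline is assumed to be a copy taken before the build, and the comment string is the one reported for this campaign, which an attacker could easily change.

```python
# Key comment reported for this campaign; trivially changed by an attacker.
REPORTED_COMMENT = "deploy@buildserver"


def _entries(text):
    """Return non-empty, non-comment lines from an authorized_keys file."""
    return [
        line.strip() for line in text.splitlines()
        if line.strip() and not line.lstrip().startswith("#")
    ]


def new_authorized_keys(baseline_text, current_text):
    """Return entries present now that were absent from the baseline, in file order."""
    known = set(_entries(baseline_text))
    return [entry for entry in _entries(current_text) if entry not in known]


def matches_reported_key(entry):
    """True when the entry's last field is the reported key comment."""
    return entry.split()[-1] == REPORTED_COMMENT
```

Any entry returned by `new_authorized_keys` after a build is suspicious on its own, since builds rarely have a reason to touch that file. The comment match is only a convenience for triage.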

Why This Matters to DevOps and DevSecOps Teams

This attack matters because it targets the real trust boundaries inside build systems. A package does not need a software bug if the ecosystem already gives it an execution path during install or import.

It also shows why traditional controls miss modern package attacks. Name review is weak when generic names look close to real libraries. Registry reputation is weak when the package is brand new. Secret scanning at rest does not help when secrets are stolen from environment variables and local files at runtime.

For build teams, the practical lesson is simple: newly published packages and unreviewed utility libraries are high-risk. If a dependency can run code during install, it can also steal credentials, rewrite environment variables, and alter later build steps. That pattern also showed up in the s1ngularity attack on AI CLI tools and the Shai-Hulud npm attack wave.

How InvisiRisk Protects Against This Attack

Block Unapproved Hosts

The InvisiRisk BAF can block outbound calls to unapproved hosts from the build runner, helping stop malicious package payloads from reaching external exfiltration endpoints.
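
The underlying idea can be sketched as a host allowlist check. This is not InvisiRisk's implementation; the host list and the function name `is_allowed` are assumptions chosen for illustration, and a real control would enforce the decision at the network layer rather than inside application code.

```python
from urllib.parse import urlsplit

# Assumed allowlist for a Ruby and Go build; adjust to the hosts a build really needs.
ALLOWED_HOSTS = {"rubygems.org", "proxy.golang.org", "sum.golang.org", "github.com"}


def is_allowed(url, allowed=ALLOWED_HOSTS):
    """Return True only when the URL's host is explicitly on the allowlist."""
    host = urlsplit(url).hostname
    return host is not None and host.lower() in allowed
```

Under this policy a request to the package proxy passes, while a request to an endpoint such as webhook.site is denied because it was never approved, regardless of whether anyone knew it was malicious.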

Stability Buffer

The InvisiRisk BAF can hold newly published packages before they enter builds, reducing exposure to fresh packages that were weaponized only hours earlier.
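
A package age gate reduces to a single comparison once the publish time of a version is known. The sketch below is a minimal illustration and not InvisiRisk's implementation; the seven-day threshold and the function name `is_old_enough` are assumptions, and fetching the publish time from a registry is left out.

```python
from datetime import datetime, timedelta, timezone


def is_old_enough(published_at, now, min_age=timedelta(days=7)):
    """Return True when the version was published at least min_age before now."""
    return now - published_at >= min_age


# Example: a version published two days before the check is held back.
check_time = datetime(2026, 4, 30, tzinfo=timezone.utc)
fresh_release = datetime(2026, 4, 28, tzinfo=timezone.utc)
hold_fresh_release = not is_old_enough(fresh_release, check_time)
```

The gate needs to apply per version rather than per package, because the sleeper packages in this campaign were published first and weaponized in later updates.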

Encrypted Secret Detection

The InvisiRisk BAF inspects outbound traffic for encoded or encrypted secrets in transit, helping catch stolen tokens, keys, and credentials before they leave the CI environment.
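
A much simpler version of this idea checks an outbound payload for known secret values in a few common encodings. This is a hedged sketch, not InvisiRisk's implementation: the function name `payload_leaks_secret` is hypothetical, and it only catches a secret sent verbatim, hex-encoded, or Base64-encoded on its own. A secret embedded in a larger Base64 blob, or encrypted, would not match.

```python
import base64


def payload_leaks_secret(payload, secrets):
    """Return True if any known secret appears in the payload raw, as hex, or as Base64."""
    for secret in secrets:
        if not secret:
            continue  # an empty string would match every payload
        raw = secret.encode("utf-8")
        variants = (raw, raw.hex().encode("ascii"), base64.b64encode(raw))
        if any(variant in payload for variant in variants):
            return True
    return False
```

The secrets passed in would be the values the CI system injected into the job, which the inspecting component already knows. That keeps the check exact instead of relying on pattern guesses.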

Why Build-Time Defenses Matter

The BufferZoneCorp campaign shows where package risk and build risk overlap. The malicious code did not wait for runtime in production. It executed during install and import, targeted secrets in developer and CI environments, and changed build behavior to make follow-on abuse easier. Teams that want to reduce exposure need controls inside the build itself, not only before or after it, because the build is where this campaign did its damage.